Google Warns of AI-Powered Cyber Threat Surge as Hackers Weaponise Generative Models

Google has revealed a sharp escalation in the use of artificial intelligence by cybercriminals and state-backed hackers, warning that generative AI is rapidly evolving from an experimental tool into a core part of modern cyber warfare.

In a new threat intelligence report, the Google Threat Intelligence Group (GTIG) said threat actors are increasingly using AI to discover vulnerabilities, generate malware, automate attacks, and scale disinformation campaigns. The report also highlighted growing attacks targeting AI systems and software supply chains themselves.

One of the most significant findings was the discovery of what Google believes to be the first AI-assisted zero-day exploit developed by cybercriminals. According to the report, the exploit was designed to bypass two-factor authentication in a popular open-source administration tool and was intended for mass exploitation before Google intervened.

"Our analysis of exploits associated with this campaign identified a zero-day vulnerability implemented in a Python script that enables the user to bypass two-factor authentication (2FA) on a popular open-source, web-based system administration tool," the report reads.

GTIG said threat groups linked to China and North Korea are increasingly using AI for vulnerability research and exploit development. Researchers observed hackers using advanced prompting techniques, specialised datasets, and autonomous AI frameworks to identify software flaws and generate proof-of-concept exploits at scale.

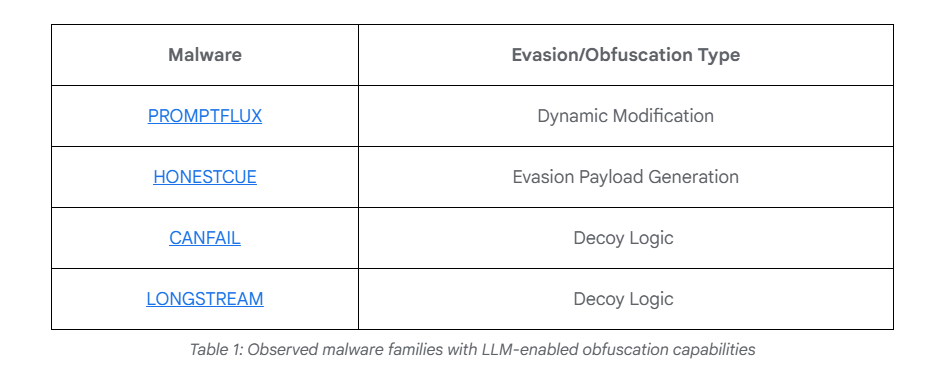

The report also detailed the rise of AI-driven malware capable of autonomous decision-making. One Android backdoor, dubbed PROMPTSPY, reportedly used Google’s Gemini API to interpret user interfaces, execute commands, and even resist uninstall attempts without human intervention.

Google further warned that adversaries are industrialising access to large language models through automated account-generation pipelines, proxy infrastructure, and account-pooling systems to evade safety restrictions and usage limits.

The company added that while AI-related threats are growing rapidly, proactive security research and stronger safeguards can help prevent large-scale abuse of generative AI systems.