Meta Unveils New RISC-V MTIA Chips to Power AI Inference

The company revealed plans to release four MTIA generations within two years, a pace that reflects the rapid evolution of AI systems and the computing infrastructure needed to support them.

Meta has unveiled the next generation of its custom artificial intelligence chips, part of a broader effort to power AI experiences across its platforms used by billions of people worldwide.

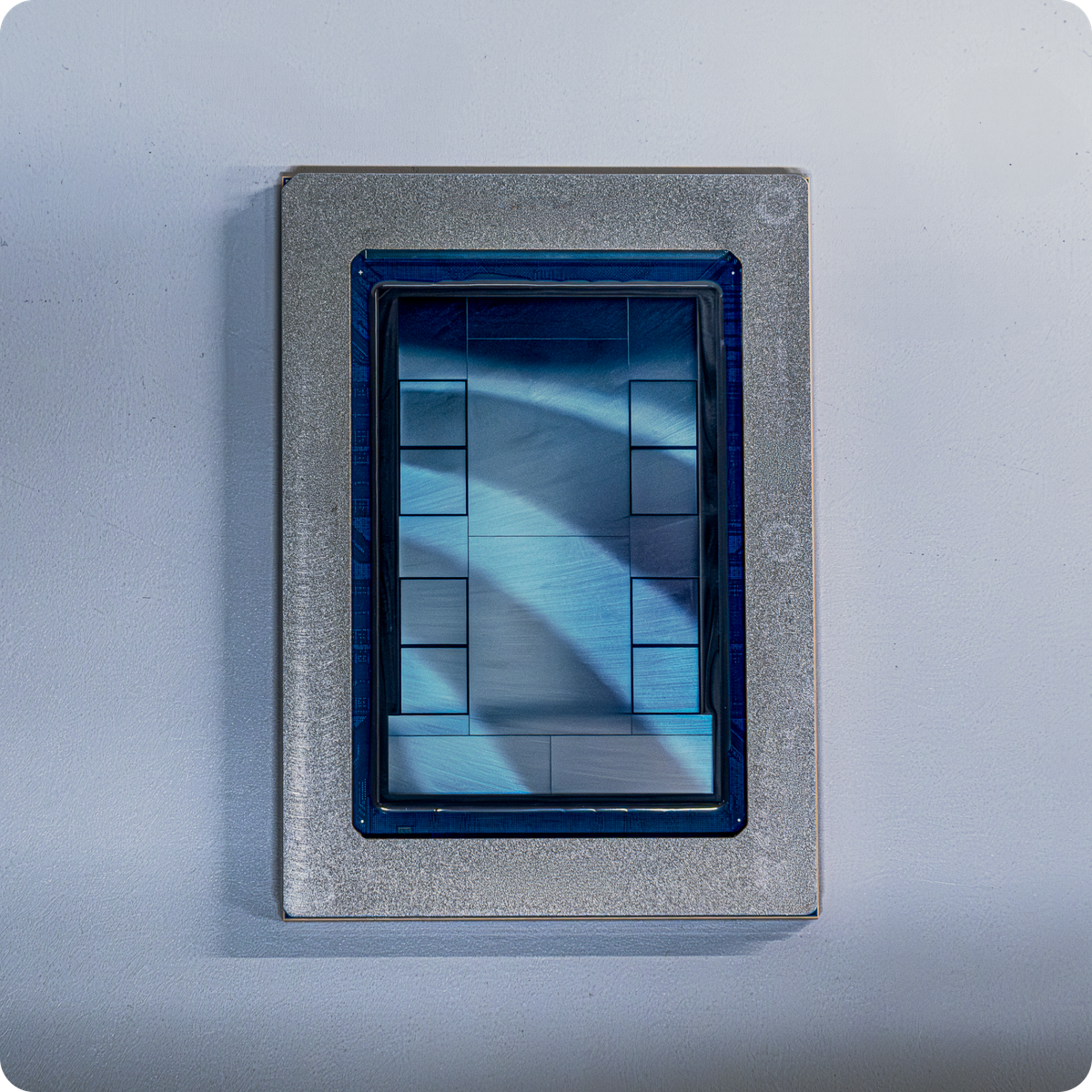

The new processors belong to the Meta Training and Inference Accelerator (MTIA) family, a line of in-house chips designed to support the company’s growing AI workloads, including recommendation systems, generative AI features and large language models.

One of the chips, MTIA 300, is already in production and is optimised for the ranking and recommendation models that determine what users see in feeds and advertisements across Meta apps. The additional chips, MTIA 400, 450 and 500, are designed to handle AI inference workloads, the process of running trained models to generate outputs such as text, images or recommendations.

The upcoming MTIA 450 and MTIA 500 processors will carry significantly more high-bandwidth memory (HBM) to support larger and more complex AI models. These chips are expected to roll out gradually through 2027, enabling Meta to scale AI systems more efficiently as demand grows.

Meta said the MTIA programme plays a critical role in cost-effectively powering AI experiences for billions of users, particularly as the company expands generative AI capabilities across products such as Facebook, Instagram and WhatsApp.

The chips are built on the open RISC-V instruction set architecture, developed in collaboration with semiconductor partners including Broadcom, and manufactured by TSMC.

"Since introducing MTIA 100 and 200, we have accelerated MTIA development across four successive generations: MTIA 300, 400, 450, and 500. These new chips have either already been deployed or are scheduled for deployment in 2026 or 2027, expanding workload coverage from ranking and recommendation (R&R) inference to R&R training, general GenAI workloads, and GenAI inference with targeted optimisations," Meta said.

By accelerating its custom silicon roadmap, Meta aims to reduce its dependence on external GPUs while building specialised hardware tailored to its massive AI infrastructure. The initiative also reflects a broader trend among major tech companies of developing proprietary chips to meet the escalating compute demands of advanced AI systems.